What are AI-Powered Voice Assistants? 2026 Guide to B2B Scaling

In the 2026 B2B landscape, the phrase "voice assistant" has undergone a radical transformation. For years, it conjured images of simple consumer gadgets used for setting kitchen timers or checking the weather. Today, for founders and RevOps leaders in the DACH region, AI-powered voice assistants have evolved into "Agentic" systems, autonomous entities capable of managing complex sales pipelines, qualifying leads, and executing backend workflows without human intervention.

As businesses look to stay lean while scaling, the shift from basic command-response tools to an integrated AI voice control system has become a primary driver of competitive advantage.

1. Defining the 2026 AI-Powered Voice Assistant

At its core, an AI-based voice assistant is a software agent that uses a multi-layered stack of technologies to interpret, process, and respond to human speech. However, the "intelligence" part of the equation has changed. Unlike the rigid, script-based IVR (Interactive Voice Response) systems of the past, today’s assistants use Large Language Models (LLMs) and "Native Multimodal" architectures to understand nuance, intent, and even emotional subtext.

The Technical Anatomy of Voice Control Artificial Intelligence

To move beyond basic commands, these systems rely on a 2026-standard "Sense-Think-Act" loop:

Automatic Speech Recognition (ASR): Modern ASR now hits 95% accuracy even in noisy environments, converting acoustic vibrations into digital text.

Natural Language Understanding (NLU): This layer identifies "Intents" (what the user wants) and "Entities" (the details).

Agentic Reasoning: Instead of following a tree, the AI queries a "Knowledge Graph" or CRM to decide the next best action.

Neural Text-to-Speech (TTS): Using models like ElevenLabs Flash or Cartesia, the AI generates human-like responses with sub-100ms synthesis.
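To make the middle of that loop concrete, here is a minimal Python sketch of the NLU and agentic reasoning steps. Everything in it is an assumption made for illustration: the keyword rules stand in for a real NLU model, `FAKE_CRM` stands in for a real CRM or knowledge graph, and the intent and action names are invented.

```python
from dataclasses import dataclass, field


@dataclass
class ParsedUtterance:
    intent: str                                    # what the user wants
    entities: dict = field(default_factory=dict)   # the details


# Hypothetical in-memory stand-in for a CRM lookup.
FAKE_CRM = {"ACME GmbH": {"stage": "qualified", "open_order": "SO-1001"}}


def understand(transcript: str) -> ParsedUtterance:
    """Toy NLU: keyword rules instead of a trained model."""
    text = transcript.lower()
    entities = {}
    for company in FAKE_CRM:
        if company.lower() in text:
            entities["company"] = company
    if "order" in text and "status" in text:
        return ParsedUtterance("check_order_status", entities)
    if "meeting" in text or "demo" in text:
        return ParsedUtterance("book_meeting", entities)
    return ParsedUtterance("unknown", entities)


def decide_next_action(parsed: ParsedUtterance) -> str:
    """Toy agentic step: consult the CRM record, then pick an action."""
    record = FAKE_CRM.get(parsed.entities.get("company", ""))
    if parsed.intent == "check_order_status" and record:
        return f"report_status:{record['open_order']}"
    if parsed.intent == "book_meeting":
        return "offer_calendar_slots"
    return "handoff_to_human"


def handle_turn(transcript: str) -> str:
    """One pass through 'think': transcript in, next action out."""
    return decide_next_action(understand(transcript))
```

In a production system the ASR output would feed `handle_turn`, and the returned action would drive the TTS response and any backend workflow.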

2. The Strategic Shift: AI Voice for Essential Services

In high-stakes industries, we are seeing the rise of AI voice for essential services. This refers to voice agents deployed in sectors where accuracy and availability are non-negotiable.

Healthcare & Clinical Documentation

In the DACH region, medical professionals are using voice control technology to combat burnout. AI assistants now listen to doctor-patient consultations and automatically extract symptoms to update Electronic Medical Records (EMR). This "ambient sensing" saves an average of 2.5 hours of admin time per day for practitioners.

Logistics & Supply Chain Triage

For logistics firms, AI-powered voice assistants handle the "First Mile" of communication. When a shipment is delayed, the AI doesn't just send a text; it calls the recipient, explains the delay using real-time data from the ERP, and offers a rescheduled window, all via a natural conversation.

3. Voice-Enabled Product Search Platforms in B2B

One of the fastest-growing segments in 2026 is voice-enabled product search platforms. In B2B e-commerce, where catalogs often contain 50,000+ SKUs with complex specifications, traditional search bars are failing.

In 2026, 71% of B2B buyers prefer using voice to research products before purchasing. A procurement manager can now say: "Find me all stainless steel valves compatible with the XB-500 series currently in stock in our Munich warehouse." The AI doesn't just show a list; it acts as a Personalized Shopping Agent, comparing prices and availability across multiple vendors. This "Zero-Click" discovery is boosting conversion rates by over 15% for early adopters.
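Once the spoken request has been turned into structured filters, the search itself is ordinary catalog filtering. The Python sketch below assumes the NLU layer has already extracted material, category, series, and warehouse from the procurement manager's sentence. The `Sku` fields and the four catalog rows are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sku:
    sku: str
    material: str
    category: str
    compatible_with: tuple
    warehouse: str
    in_stock: int


# Hypothetical catalog rows, for illustration only.
CATALOG = [
    Sku("V-100", "stainless steel", "valve", ("XB-500",), "Munich", 12),
    Sku("V-101", "brass", "valve", ("XB-500",), "Munich", 5),
    Sku("V-102", "stainless steel", "valve", ("XB-500",), "Vienna", 8),
    Sku("V-103", "stainless steel", "valve", ("XB-500",), "Munich", 0),
]


def search(catalog, *, material, category, series, warehouse):
    """Return SKU codes matching every filter that are currently in stock."""
    return [
        item.sku
        for item in catalog
        if item.material == material
        and item.category == category
        and series in item.compatible_with
        and item.warehouse == warehouse
        and item.in_stock > 0
    ]


# Filters as they might be extracted from the spoken request above.
matches = search(
    CATALOG,
    material="stainless steel",
    category="valve",
    series="XB-500",
    warehouse="Munich",
)
```

Here only `V-100` passes all five conditions; the others fail on material, warehouse, or stock level.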

4. AI Voice Assistant Development: The 300ms Rule

When it comes to AI-based voice assistant development, the biggest hurdle isn't "what" the AI says, but "how fast" it says it. In human conversation, we expect a response within 200-500 milliseconds. If an AI takes 2 seconds to "think," the conversation feels broken.

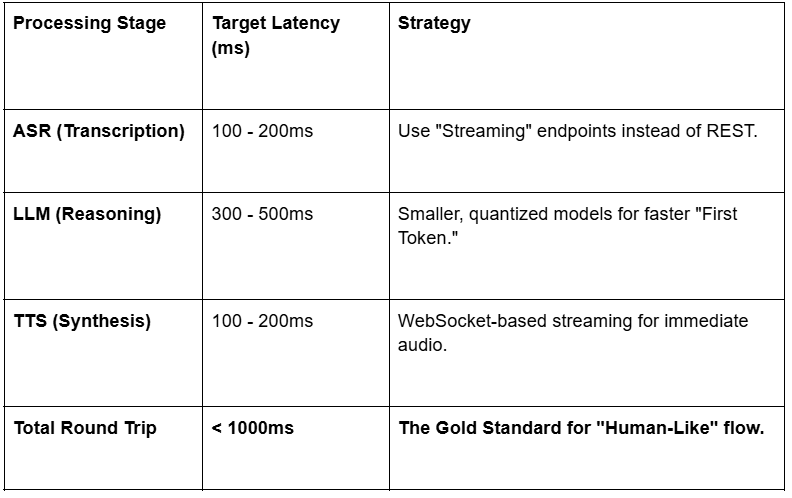

Latency Benchmarks for 2026
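A simple way to reason about the response-time target is to add up a per-stage latency budget. The Python sketch below does that arithmetic. The stage names and millisecond values are illustrative assumptions, not measured benchmarks, and the 500 ms budget is simply the upper end of the 200-500 ms range mentioned above.

```python
# Upper end of the conversational range discussed in the text.
CONVERSATIONAL_BUDGET_MS = 500


def total_latency_ms(stages: dict) -> int:
    """Sum the per-stage latencies of one conversational turn."""
    return sum(stages.values())


def over_budget(stages: dict, budget_ms: int = CONVERSATIONAL_BUDGET_MS) -> bool:
    """True if the turn would exceed the conversational budget."""
    return total_latency_ms(stages) > budget_ms


def slowest_stage(stages: dict) -> str:
    """Name of the stage that contributes the most latency."""
    return max(stages, key=stages.get)


# Hypothetical timings in milliseconds, for illustration only.
example_turn = {
    "asr": 150,
    "llm_first_token": 250,
    "tts_first_audio": 90,
    "network": 60,
}
```

With these assumed numbers the turn totals 550 ms, which is over budget, and `slowest_stage` points at the LLM step as the first place to optimize.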

5. Best Practices for AI Assistant Audio Message Response

To ensure your virtual voice assistant builds trust rather than frustration, your team must apply these AI assistant audio message response best practices:

Implement "Barge-In" Capability: Users should be able to interrupt the AI. The system must immediately halt its audio playback the moment it detects user speech (Sub-200ms halt).

Use Filler Words Judiciously: To mask a 500ms processing lag, the AI can use "verbal cues" like "Let me check that for you..." to keep the user engaged.

Tone Matching: Modern voice control technology can now detect frustration in a user's tone. If a caller sounds angry, the AI should automatically soften its tone or offer a human handoff.

Contextual Persistence: The AI must remember that if a user said "I want the blue one" three minutes ago, "it" still refers to the blue product.
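Two of these practices, barge-in and filler phrases, can be sketched in a few lines of Python. This is a simplified model under stated assumptions: `PlaybackController` is a hypothetical class that only records events instead of driving real audio, and the 500 ms filler threshold is taken from the example above rather than from any standard.

```python
from typing import Optional


class PlaybackController:
    """Tracks whether the assistant is speaking and halts on barge-in."""

    def __init__(self):
        self.speaking = False
        self.events = []  # log of ("speak", text) and ("halt", None)

    def start_speaking(self, text: str) -> None:
        self.speaking = True
        self.events.append(("speak", text))

    def on_user_speech_detected(self) -> bool:
        """Stop playback if the user interrupts; return True if we halted."""
        if self.speaking:
            self.speaking = False
            self.events.append(("halt", None))
            return True
        return False


def pick_filler(expected_delay_ms: int, threshold_ms: int = 500) -> Optional[str]:
    """Return a verbal cue only when the expected lag is long enough to notice."""
    if expected_delay_ms >= threshold_ms:
        return "Let me check that for you..."
    return None
```

A real implementation would call `on_user_speech_detected` from a voice activity detector and would need to meet the sub-200ms halt target in its audio layer, which this sketch does not attempt.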

6. The "Maturity Model" for DACH Businesses

How "Voice Ready" is your organization? We categorize the adoption of an integrated AI voice control system into four levels:

Level 1: The Reactive Bot (Legacy)

Basic IVR systems. "Press 1 for Sales." These are being phased out in 2026 as they lead to high abandonment rates.

Level 2: The Conversational Assistant

Capable of answering FAQs and looking up order statuses. Most "Voice-First" SMEs are currently at this level.

Level 3: The Integrated Agent

The AI is connected to your CRM (Salesforce/HubSpot). It can book meetings, qualify leads based on "BANT" criteria, and update lead scores in real-time.

Level 4: The Autonomous Revenue Engine (The ScaleOS Standard)

The AI proactively calls dormant leads, conducts full discovery sessions, and uses voice control technology to manage the entire top-of-funnel without human oversight.
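The BANT qualification mentioned under Level 3 (Budget, Authority, Need, Timeline) is easy to express as a scoring rule. The Python sketch below is one possible scheme, not a standard: the equal 25-point weights, the six-month timeline window, and the 75-point qualification threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BantAnswers:
    has_budget: bool
    is_decision_maker: bool
    has_need: bool
    timeline_months: Optional[int]  # None means no timeline was given


def bant_score(answers: BantAnswers, max_timeline_months: int = 6) -> int:
    """Score 0-100: 25 points per satisfied BANT criterion (assumed weights)."""
    score = 0
    if answers.has_budget:
        score += 25
    if answers.is_decision_maker:
        score += 25
    if answers.has_need:
        score += 25
    if (
        answers.timeline_months is not None
        and answers.timeline_months <= max_timeline_months
    ):
        score += 25
    return score


def is_qualified(answers: BantAnswers, threshold: int = 75) -> bool:
    """A lead qualifies when it meets the (assumed) score threshold."""
    return bant_score(answers) >= threshold
```

The resulting score is the kind of value an integrated agent could write back to a lead record after a call.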

7. Overcoming Regional Challenges: The DACH Focus

For businesses in Germany, Austria, and Switzerland, AI voice assistant development faces unique hurdles that global platforms often overlook:

Dialect Recognition: Standard German models often fail with Swiss-German or Bavarian accents. Success in 2026 requires "Fine-Tuning" on regional datasets.

GDPR & Data Sovereignty: B2B voice data is highly sensitive. An integrated AI voice control system must offer EU-hosted processing and clear "Consent Management" protocols where the user is informed they are speaking to an AI.

Trust Gaps: Only 4% of businesses are truly "voice-ready." Leading with transparency and "Human-in-the-loop" fallbacks is essential for adoption.
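The consent requirement can be enforced in code by refusing to store anything until the caller has been told they are speaking to an AI and has agreed. The Python sketch below shows that gate in its simplest form. The `CallSession` structure, the disclosure wording, and the accepted answers are hypothetical, and what actually satisfies GDPR depends on legal review that this example does not cover.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical disclosure wording, for illustration only.
AI_DISCLOSURE = (
    "You are speaking with an AI assistant. "
    "May this call be processed to handle your request?"
)


@dataclass
class CallSession:
    caller_id: str
    disclosed_at: Optional[datetime] = None
    consent_given: bool = False
    transcript: list = field(default_factory=list)


def disclose(session: CallSession) -> str:
    """Record when the AI disclosure was played and return its text."""
    session.disclosed_at = datetime.now(timezone.utc)
    return AI_DISCLOSURE


def record_consent(session: CallSession, answer: str) -> None:
    """Accept consent only after disclosure and only on an explicit yes."""
    said_yes = answer.strip().lower() in {"yes", "ja"}
    session.consent_given = session.disclosed_at is not None and said_yes


def store_utterance(session: CallSession, text: str) -> bool:
    """Persist caller speech only if consent is on record."""
    if not session.consent_given:
        return False
    session.transcript.append(text)
    return True
```

Note that a "yes" given before the disclosure is ignored, so the order of the two steps is enforced rather than assumed.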

8. The ROI of Voice: The 2026 Data

Why invest in a virtual voice assistant now? The data from the past two quarters is undeniable:

Cost Reduction: Automating routine inquiries (order tracking, password resets) reduces the "Cost Per Interaction" by 40%.

Revenue Expansion: Calls initiated through voice search or AI outreach show a 10x higher conversion rate than standard web leads.

Speed to Lead: In a market where 50% of buyers choose the vendor that responds first, a voice AI can answer every inbound inquiry immediately, 24/7.
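To see what the 40% cost reduction means for a budget, it helps to run the arithmetic on your own volumes. The Python sketch below does that. The inputs of 10,000 interactions per month at 5.00 per interaction are hypothetical numbers picked to keep the math easy to follow: 10,000 × 5.00 × 0.40 = 20,000 saved per month.

```python
def monthly_savings(
    interactions_per_month: int,
    cost_per_interaction: float,
    reduction: float = 0.40,
) -> float:
    """Savings if the cost per interaction drops by `reduction` (40% in the text)."""
    return interactions_per_month * cost_per_interaction * reduction


def new_cost_per_interaction(cost_per_interaction: float, reduction: float = 0.40) -> float:
    """Cost per interaction after applying the reduction."""
    return cost_per_interaction * (1 - reduction)


# Hypothetical inputs, chosen only to illustrate the calculation.
example_savings = monthly_savings(
    interactions_per_month=10_000,
    cost_per_interaction=5.00,
)
```

Swapping in your real interaction volume and current cost per interaction gives a first-order estimate, before accounting for the cost of running the assistant itself.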

Conclusion: Designing the Future of Sound

The era of "Basic Commands" is over. We have entered the era of the virtual voice assistant as a core member of the sales and operations team. By moving toward an integrated AI voice control system, you are building a business that is always on, always professional, and, most importantly, always listening.

The winners of 2026 won't be those who have the biggest call centers, but those who have the smartest, fastest, and most "human" AI voice engines.

Want to see how ScaleOS can build your autonomous voice engine?

Get a Voice-Ready Audit for your CRM right now.

Frequently Asked Questions

What is an AI Voice Assistant?

An AI voice assistant is an advanced software agent that uses Natural Language Processing (NLP) and Speech-to-Text (STT) technology to understand and respond to human speech. Unlike old-school automated phone menus that require you to "Press 1," an AI voice assistant understands natural conversation, context, and even the caller's intent. In a business setting, it acts as a digital team member that can handle customer inquiries, qualify leads, and perform tasks hands-free.

How to Make an AI Voice Assistant?

Creating a modern, enterprise-grade voice assistant involves four key technical layers:

The Ear (ASR): Use an Automatic Speech Recognition API (like Deepgram or OpenAI Whisper) to transcribe audio to text in real-time.

The Brain (LLM): Connect the text to a Large Language Model (like GPT-4o or Claude 3.5) to determine the meaning and the best response.

The Voice (TTS): Use a Text-to-Speech engine (like ElevenLabs or Play.ht) to turn the AI's written response back into a natural, human-like voice.

The Connection (Orchestration): Use tools like Vapi or Retell AI to link these layers together and connect them to a phone number or web interface.
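The four layers above can be kept swappable by coding against small interfaces rather than a specific vendor. The Python sketch below shows that design with stub implementations. It does not call Deepgram, Whisper, ElevenLabs, Vapi, or any other real API: the `Protocol` classes, method names, and stubs are all hypothetical, and a real build would wrap each provider's SDK behind them.

```python
from typing import Protocol


class SpeechToText(Protocol):      # "The Ear"
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):     # "The Brain"
    def reply(self, text: str) -> str: ...


class TextToSpeech(Protocol):      # "The Voice"
    def synthesize(self, text: str) -> bytes: ...


class VoicePipeline:               # "The Connection"
    """Links the three layers for a single conversational turn."""

    def __init__(self, ear: SpeechToText, brain: LanguageModel, voice: TextToSpeech):
        self.ear = ear
        self.brain = brain
        self.voice = voice

    def handle_audio(self, audio: bytes) -> bytes:
        text = self.ear.transcribe(audio)
        answer = self.brain.reply(text)
        return self.voice.synthesize(answer)


# Stub layers so the sketch runs without any external service.
class EchoEar:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class CannedBrain:
    def reply(self, text: str) -> str:
        return f"You said: {text}"


class BytesVoice:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


pipeline = VoicePipeline(EchoEar(), CannedBrain(), BytesVoice())
```

Because each layer is only an interface, swapping one ASR or TTS provider for another means writing one new adapter class, not rewriting the pipeline.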

How Do ScaleOS Digital Assistants Work?

At ScaleOS, our digital assistants are built as "Agentic Systems." This means they don't just talk—they act.

Direct Integration: We wire the AI directly into your CRM (HubSpot/Salesforce) and calendar tools.

Autonomous Reasoning: When a prospect calls, the ScaleOS assistant identifies who they are, checks their history in your database, and decides whether to qualify them or book a meeting.

Real-Time Fulfillment: It can trigger workflows, such as sending a follow-up email or updating a lead status, immediately after the call ends.

DACH Localization: Our assistants are specifically optimized for the German-speaking market, handling regional nuances and strict GDPR privacy standards.

What is a Virtual Voice Assistant?

A virtual voice assistant is a broad term for any AI-powered interface that allows users to interact with a system using only their voice. While "Voice Assistants" are often consumer-facing (like Alexa), a virtual assistant in a professional context usually refers to a more comprehensive system that can execute complex business tasks, such as technical support, clinical documentation, or automated outbound sales, without needing a physical device or a human operator.